Part II: Kernel Architecture

Architecture Overview

QuantumRT is a POSIX-native real-time kernel that implements a subset of the POSIX.1-2024 standard. In addition, QuantumRT incorporates advanced real-time features such as Precise Scheduling and Memory Protection.

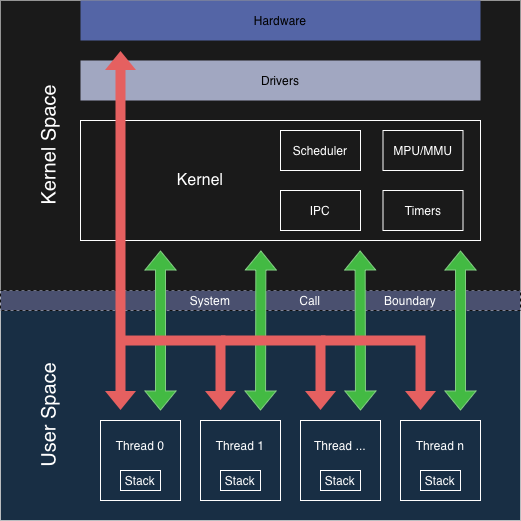

Kernel Architecture diagram.

Execution Model

QuantumRT operates in two execution levels: Privileged and Unprivileged. Privileged code has unrestricted access to all system resources, while unprivileged code is restricted from modifying critical kernel or hardware registers.

When Memory Protection is disabled, all code executes in privileged mode and can access the entire memory map.

When Memory Protection is enabled, QuantumRT runs the kernel, the idle thread, and all interrupt service routines in privileged mode. User threads may execute in unprivileged mode, which isolates them from kernel memory and from other threads' stacks and prevents direct access to protected peripherals. User threads may also run in privileged mode if required.

Memory Model

The kernel and each thread have their own stack memory, isolated from one another.

ARM Cortex-M processors implement a Process Stack Pointer (PSP) and a Main Stack Pointer (MSP) to facilitate stack separation. Threads (including the idle thread) run on the PSP, while the kernel and ISRs use the MSP.

Boot & Kernel Bring-Up

QuantumRT ships with a default Board Support Package (BSP) for ARM Cortex-M platforms, which handles the low-level initialization required for kernel operation.

The BSP must be initialized by calling bsp_init().

Before booting the kernel, the System Timer must be configured by calling qrt_systimer_configure().

The kernel is initialized and started by calling qrt_kernel_boot(), which takes thread attributes for the startup thread as parameters.

After the kernel has started, the scheduler begins managing thread execution and the startup thread begins running.

More threads can be created after the kernel has started.

The kernel handles Memory Protection Unit (MPU) configuration if Memory Protection is enabled (QRT_CFG_MPU_ENABLE).

Timers

System Timer

The kernel requires a hardware timer to serve as the primary timekeeping mechanism. The System Timer provides time-based services, ensuring accurate delays, timeouts, and scheduling. The accuracy of timing services is directly dependent on the frequency of the hardware System Timer.

The System Timer interrupt is configured with the highest priority to maintain accurate timing and ensure no ticks are lost during interrupt handling. To satisfy this requirement, the System Timer interrupt must be the only interrupt assigned the highest priority level.

Configuration

The kernel requires integration with a hardware timer.

The user must configure the hardware timer to trigger interrupts on overflow and provide qrt_systimer_configure() with the following parameters:

A function for reloading the hardware timer

A function for reading the hardware timer

The maximum period of the hardware timer in ticks

The frequency of the hardware timer in Hertz (Hz)

The hardware timer’s interrupt request number

The hardware timer’s interrupt handler must invoke qrt_systimer_handler().

The kernel manages the interrupt controller configuration for the hardware timer.

Precise Scheduling

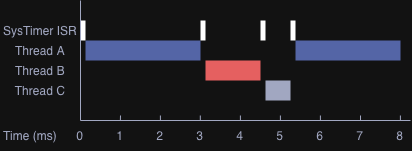

The kernel implements Precise Scheduling, dynamically loading the System Timer with the next scheduled event’s deadline instead of relying on fixed system ticks. This approach eliminates unnecessary timer interrupts, improving accuracy, reducing CPU overhead, and enhancing energy efficiency compared to traditional Tick-Based Scheduling.

Precise Scheduling timing diagram.
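The reload decision behind Precise Scheduling can be sketched in a few lines of C. This is a hypothetical illustration, not QuantumRT code: next_reload() and its parameters are names invented here. It assumes time is counted in hardware timer ticks and that the timer can count at most max_period ticks per reload, so a distant deadline is reached through several reloads.

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical helper, not part of the QuantumRT API.
 * Returns the number of ticks to program into the hardware timer so that it
 * expires at the next deadline, clamped to the longest period the timer
 * supports. A deadline that is already due yields the minimum of 1 tick. */
static uint32_t next_reload(uint64_t now, uint64_t deadline, uint32_t max_period)
{
    if (deadline <= now) {
        return 1u;                  /* overdue: expire as soon as possible */
    }

    uint64_t remaining = deadline - now;
    if (remaining > max_period) {
        return max_period;          /* too far away: reload again on expiry */
    }
    return (uint32_t)remaining;
}
```

With a 24-bit timer (max_period of 0xFFFFFF), a deadline 50 ticks away produces a reload value of 50, so a single interrupt fires where a fixed-tick design would have taken one interrupt per tick period.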

Tick-Based Scheduling

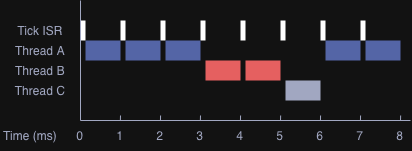

For compatibility with legacy code or applications designed for Tick-Based Scheduling systems, QuantumRT provides an API for querying the system time in ticks.

The system time can be retrieved with qrt_systimer_getmonotonic().

Internally, QuantumRT uses Precise Scheduling. The tick count is derived from the actual elapsed time, not generated by periodic timer interrupts.

Tick-Based Scheduling timing diagram.
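Deriving a tick count from elapsed time is plain arithmetic, sketched below. The helper ticks_from_timespec() and the tick_hz parameter are hypothetical names, and this section does not state how QuantumRT performs the conversion internally. The sketch assumes the elapsed time is available as a POSIX struct timespec.

```c
#include <assert.h>
#include <stdint.h>
#include <time.h>

#define NSEC_PER_SEC 1000000000ull

/* Hypothetical helper: convert elapsed monotonic time into a legacy tick
 * count for the given tick rate. Whole seconds and the sub-second part are
 * converted separately, so the intermediate products stay within 64 bits.
 * Partial ticks are truncated. */
static uint64_t ticks_from_timespec(const struct timespec *elapsed,
                                    uint32_t tick_hz)
{
    uint64_t whole = (uint64_t)elapsed->tv_sec * tick_hz;
    uint64_t frac  = ((uint64_t)elapsed->tv_nsec * tick_hz) / NSEC_PER_SEC;
    return whole + frac;
}
```

At a tick rate of 1000 Hz, an elapsed time of 2.5 seconds corresponds to 2500 ticks, regardless of how many timer interrupts actually occurred in that interval.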

Scheduler

The scheduler manages thread execution, ensuring fair resource distribution, responsiveness, and prioritized handling of critical threads. The kernel uses preemptive scheduling, allowing it to interrupt a running thread whenever a higher priority thread becomes ready to execute.

Priority-Based Scheduling

The kernel uses preemptive priority-based scheduling, a scheduling algorithm designed to allocate processing time among threads based on their priority level, where a higher value represents higher priority.

To enable Priority-Based scheduling for a thread, set the SCHED_FIFO policy in the thread's attributes with pthread_attr_setschedpolicy().
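A minimal sketch of preparing such attributes with standard POSIX calls follows. The helper make_fifo_attr() is a name invented for this example. The sketch is written against POSIX as found on a desktop system; this section does not state whether QuantumRT's POSIX subset includes pthread_attr_setinheritsched() or pthread_attr_setschedparam(), so treat those two calls as assumptions.

```c
#define _POSIX_C_SOURCE 200809L
#include <assert.h>
#include <pthread.h>
#include <sched.h>

/* Hypothetical helper: initialise attr so that a thread created with it
 * requests SCHED_FIFO at the given priority.
 * Returns 0 on success or the error number of the first failing call. */
static int make_fifo_attr(pthread_attr_t *attr, int priority)
{
    struct sched_param param = { .sched_priority = priority };
    int rc = pthread_attr_init(attr);
    if (rc != 0) {
        return rc;
    }

    /* Use the policy stored in attr instead of inheriting the creator's. */
    rc = pthread_attr_setinheritsched(attr, PTHREAD_EXPLICIT_SCHED);
    if (rc == 0) {
        rc = pthread_attr_setschedpolicy(attr, SCHED_FIFO);
    }
    if (rc == 0) {
        rc = pthread_attr_setschedparam(attr, &param);
    }
    if (rc != 0) {
        pthread_attr_destroy(attr);   /* do not leak a half-configured attr */
    }
    return rc;
}
```

The resulting attribute object is then passed to pthread_create(). The same pattern applies to any other policy constant accepted by pthread_attr_setschedpolicy().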

Round-Robin Scheduling

The kernel provides Round-Robin scheduling for threads of equal priority, ensuring fair distribution of CPU time among threads without strict real-time constraints, such as background tasks. Threads execute in rotation, each receiving a fixed execution time slice. When the time slice expires or a context switch occurs, the thread is returned to the ready queue, and another thread at the same priority level executes. However, the kernel does not guarantee a thread always completes its full time slice.

To enable Round-Robin scheduling for a thread, set the SCHED_RR policy in the thread's attributes with pthread_attr_setschedpolicy().

Round-Robin scheduling is a superset of Priority-Based scheduling, meaning that Round-Robin scheduling also considers thread priority levels. Round-Robin scheduling is the default scheduling policy.
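The rotation among threads of equal priority can be modelled with a toy simulation. This is not kernel code: round_robin() and its parameters are hypothetical, work is measured in abstract units, and the model ignores preemption by higher-priority threads, which in the real kernel can cut a time slice short.

```c
#include <assert.h>
#include <stddef.h>

/* Toy model: rotate through n equal-priority tasks, giving each at most
 * `slice` units of work per turn, until every task has finished.
 * The index of each task is written to done_order in completion order. */
static void round_robin(unsigned remaining[], size_t n, unsigned slice,
                        size_t done_order[])
{
    size_t finished = 0;

    if (slice == 0) {
        return;                     /* a zero-length slice makes no progress */
    }

    /* Tasks that start with no work count as finished immediately. */
    for (size_t i = 0; i < n; i++) {
        if (remaining[i] == 0) {
            done_order[finished++] = i;
        }
    }

    while (finished < n) {
        for (size_t i = 0; i < n; i++) {
            if (remaining[i] == 0) {
                continue;           /* already finished, no longer queued */
            }
            unsigned run = remaining[i] < slice ? remaining[i] : slice;
            remaining[i] -= run;    /* task runs, then the next one is picked */
            if (remaining[i] == 0) {
                done_order[finished++] = i;
            }
        }
    }
}
```

For three tasks needing 3, 1, and 2 units with a slice of 1, the tasks finish in the order 1, 2, 0: the shortest task completes first even though it was not first in the queue, which is the fairness property the policy is meant to provide.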

Interrupts and Scheduling

Interrupt Service Routines (ISRs) respond to hardware events by temporarily interrupting thread execution.

QuantumRT does not perform scheduling directly within ISR context.

Instead, QuantumRT provides deferred kernel calls through qrt_defer_call(), which let ISRs request kernel services that run after the ISR has completed.

This approach minimizes interrupt latency and ensures predictable execution.
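The general idea of deferring work out of an ISR can be illustrated with a small queue of pending calls. Everything in this sketch is hypothetical (defer_queue, defer_post(), defer_drain(), and bump() are invented names) and it does not show the signature or implementation of qrt_defer_call(). It is also a single-threaded model: a real implementation would need atomic index updates or interrupt masking.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

#define DEFER_SLOTS 8u              /* capacity; must be a power of two */

typedef void (*defer_fn)(void *arg);

struct defer_queue {
    struct { defer_fn fn; void *arg; } slot[DEFER_SLOTS];
    unsigned head;                  /* next request to run */
    unsigned tail;                  /* next free slot */
};

/* ISR side: record the request and return immediately. */
static bool defer_post(struct defer_queue *q, defer_fn fn, void *arg)
{
    if (q->tail - q->head == DEFER_SLOTS) {
        return false;               /* queue full, request rejected */
    }
    q->slot[q->tail % DEFER_SLOTS].fn = fn;
    q->slot[q->tail % DEFER_SLOTS].arg = arg;
    q->tail++;
    return true;
}

/* Kernel side: after the ISR has returned, run requests in FIFO order.
 * Returns the number of requests executed. */
static unsigned defer_drain(struct defer_queue *q)
{
    unsigned ran = 0;
    while (q->head != q->tail) {
        unsigned i = q->head % DEFER_SLOTS;
        q->slot[i].fn(q->slot[i].arg);
        q->head++;
        ran++;
    }
    return ran;
}

/* Example deferred request: increment the counter that arg points to. */
static void bump(void *arg)
{
    ++*(int *)arg;
}
```

The ISR only pays for a bounded enqueue, while the potentially longer service work runs later outside interrupt context, which is what keeps interrupt latency short.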

Yielding

Threads do not need to yield explicitly, because the scheduler automatically preempts lower-priority threads and manages execution order based on priority and scheduling policy.

Threads can voluntarily yield the processor using sched_yield(), allowing other threads of equal priority to execute.

Yielding is particularly useful when a thread has completed its immediate work and wants to allow other threads to run.
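As a small illustration, a background thread can process data in chunks and call sched_yield() between them so that other ready threads of equal priority get a turn. The function sum_with_yield() and its chunk parameter are hypothetical names chosen for this sketch.

```c
#include <assert.h>
#include <sched.h>
#include <stddef.h>

/* Hypothetical example: sum an array in chunks of `chunk` elements and
 * offer the processor to equal-priority threads after each chunk. */
static long sum_with_yield(const int *data, size_t len, size_t chunk)
{
    long total = 0;
    size_t i = 0;

    if (chunk == 0) {
        chunk = 1;                  /* avoid a loop that never advances */
    }

    while (i < len) {
        size_t end = (len - i < chunk) ? len : i + chunk;
        for (; i < end; i++) {
            total += data[i];
        }
        if (i < len) {
            (void)sched_yield();    /* more work remains: let peers run first */
        }
    }
    return total;
}
```

The result is the same as for an uninterrupted loop; only the points at which other threads may run change.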

Priority Inversion

Priority inversion occurs when a lower-priority thread indirectly prevents a higher-priority thread from executing, effectively reversing the intended priority order. Priority inversion can be bounded or unbounded:

Bounded priority inversion occurs when a high-priority thread is waiting for a resource held by a low-priority thread. The duration of inversion is bounded because it ends as soon as the low-priority thread releases the resource.

Unbounded priority inversion occurs if the duration of inversion becomes unpredictable due to intermediate-priority threads preempting the low-priority thread holding a critical resource needed by a high-priority thread.

QuantumRT mitigates priority inversion through Priority Inheritance (PI) and Priority Ceiling (PC) protocols when using mutexes. See POSIX Thread Mutex for details on configuring these protocols.
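In standard POSIX, the protocol is selected through a mutex attribute object. The sketch below initializes a mutex with Priority Inheritance. The helper init_pi_mutex() is a name invented here, and the code assumes that QuantumRT exposes the standard pthread_mutexattr_setprotocol() interface, which the POSIX Thread Mutex section should confirm.

```c
#define _POSIX_C_SOURCE 200809L
#include <assert.h>
#include <pthread.h>

/* Hypothetical helper: initialise a mutex that uses the priority
 * inheritance protocol.
 * Returns 0 on success or the error number of the failing call. */
static int init_pi_mutex(pthread_mutex_t *mutex)
{
    pthread_mutexattr_t attr;
    int rc = pthread_mutexattr_init(&attr);
    if (rc != 0) {
        return rc;
    }

    rc = pthread_mutexattr_setprotocol(&attr, PTHREAD_PRIO_INHERIT);
    if (rc == 0) {
        rc = pthread_mutex_init(mutex, &attr);
    }

    /* The attribute object is no longer needed once the mutex exists. */
    pthread_mutexattr_destroy(&attr);
    return rc;
}
```

While a low-priority thread holds such a mutex and a high-priority thread waits for it, the holder temporarily runs at the waiter's priority, so intermediate-priority threads cannot preempt it. For Priority Ceiling, standard POSIX uses PTHREAD_PRIO_PROTECT together with pthread_mutexattr_setprioceiling().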

Idle Thread

The kernel creates an idle thread that runs when no other threads are in the ready state. The idle thread always runs at the lowest priority level, and System Calls cannot be invoked from it. The idle thread executes a minimal infinite loop unless power-saving mode is enabled.

If power-saving mode is enabled, the idle thread places the processor into a low-power state when no other threads are ready.

Power-saving mode can be enabled with the configuration option QRT_CFG_IDLE_DEEP_SLEEP_ENABLE.

An idle thread hook can be registered and unregistered with qrt_kernel_idlehookregister() to execute custom code during idle periods.

Care must be taken to ensure the hook function does not compromise system security, because it executes in kernel context.

Floating-Point Support

Some supported processor cores include an FPU (Floating-Point Unit), introducing additional registers that must be managed during context switching. Stacking floating-point registers increases thread stack size, adds interrupt latency, and extends context switching time because the registers must be saved and restored.

The kernel supports stacking floating-point registers. Stacking is enabled per thread by calling qrt_fpu_ctxenable() and disabled by calling qrt_fpu_ctxdisable().

FPU support can be enabled with the configuration option QRT_CFG_FPU_ENABLE, and floating-point stacking can be enabled with QRT_CFG_FPU_STACKING_ENABLE.
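The stack cost of floating-point stacking can be estimated from the Cortex-M exception frame layout. On ARMv7-M cores with an FPU, the hardware-stacked frame grows from 8 words to 26 words when floating-point state is included. The sketch below also counts the callee-saved registers that a context switch typically saves in software (R4-R11, plus S16-S31 with floating point). How QuantumRT lays out its saved context is not stated in this section, so the software-saved part and the name context_bytes() are assumptions.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

enum {
    WORD_BYTES        = 4,
    HW_FRAME_BASIC    = 8,    /* R0-R3, R12, LR, PC, xPSR */
    HW_FRAME_FP_EXTRA = 18,   /* S0-S15, FPSCR, one reserved word */
    SW_SAVED_CORE     = 8,    /* R4-R11 (assumed saved by the context switch) */
    SW_SAVED_FP       = 16    /* S16-S31 (assumed saved by the context switch) */
};

/* Estimated bytes of thread stack consumed by one saved context. */
static size_t context_bytes(bool fp_stacking)
{
    size_t words = HW_FRAME_BASIC + SW_SAVED_CORE;
    if (fp_stacking) {
        words += HW_FRAME_FP_EXTRA + SW_SAVED_FP;
    }
    return words * WORD_BYTES;
}
```

Under these assumptions a saved context grows from 64 bytes to 200 bytes when floating-point stacking is active, which is why enabling it only for threads that actually use the FPU keeps stack requirements down.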